Artificial Stupidity: Learning To Trust Artificial Intelligence (Sometimes)

A young Marine reaches out for a hand-launched drone.

In science fiction and real life alike, there are plenty of horror stories where humans trust artificial intelligence too much. They range from letting the fictional SkyNet control our nuclear weapons to letting Patriots shoot down friendly planes or letting Tesla Autopilot crash into a truck. At the same time, though, there’s also a danger of not trusting AI enough.

As conflict on earth, in space, and in cyberspace becomes increasingly fast-paced and complex, the Pentagon’s Third Offset initiative is counting on artificial intelligence to help commanders, combatants, and analysts chart a course through chaos, what we’ve dubbed the War Algorithm. But if the software itself is too complex, too opaque, or too unpredictable for its users to understand, they’ll just turn it off and do things manually. At least, they’ll try: What worked for Luke Skywalker against the first Death Star probably won’t work in real life. Humans can’t respond to cyberattacks in microseconds or coordinate defense against a massive missile strike in real time. With Russia and China both investing in AI systems, deactivating our own AI may amount to unilateral disarmament.

Abandoning AI is not an option. Neither is abandoning human input. The challenge is to create an artificial intelligence that can earn the human’s trust, an AI that seems transparent or even human.

Robert Work

Tradeoffs for Trust

“Clausewitz had a term called coup d’oeil,” a great commander’s intuitive grasp of opportunity and danger on the battlefield, said Robert Work, the outgoing Deputy Secretary of Defense and father of the Third Offset, at a Johns Hopkins AI conference in May. “Learning machines are going to give more and more commanders coup d’oeil.”

Conversely, AI can speak the ugly truths that human subordinates may not. “There are not many captains that are going to tell a four-star COCOM (combatant commander) ‘that idea sucks,’” Work said, “(but) the machine will say, ‘you are an idiot, there is a 99 percent probability that you are going to get your ass handed to you.’”

Before commanders will take an AI’s insights as useful, however, Work emphasized, they need to trust it and understand how it works. That requires intensive “operational test and evaluation, where you convince yourself that the machines will do exactly what you expect them to, reliably and repeatedly,” he said. “This goes back to trust.”

Trust is so important, in fact, that two experts we heard from said they were willing to accept some tradeoffs in performance in order to get it: A less advanced and versatile AI, even a less capable one, is better than a brilliant machine you can’t trust.

Army command post

The intelligence community, for instance, is keenly interested in AI that can help its analysts make sense of mind-numbing masses of data. But the AI has to help the analysts explain how it came to its conclusions, or they can never brief them to their bosses, explained Jason Matheny, director of the Intelligence Advanced Research Projects Agency. IARPA is the intelligence equivalent of DARPA, which is running its own “explainable AI” project. So, when IARPA held one recent contest for analysis software, Matheny told the AI conference, it barred entry to programs whose reasoning could not be explained in plain English.

“From the start of this program, (there) was a requirement that all the systems be explainable in natural language,” Matheny said. “That ended up consuming about half the effort of the researchers, and they were really irritated… because it meant they couldn’t in most cases use the best deep neural net approaches to solve this problem, they had to use kernel-based methods that were easier to explain.”

Compared to cutting-edge but harder-to-understand software, Matheny said, “we got a 20-30 percent performance loss… but these tools were actually adopted. They were used by analysts because they were explainable.”

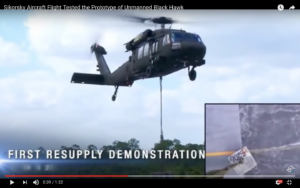

Transparent, predictable software isn’t only important for analysts: It’s also vital for pilots, said Igor Cherepinsky, director of autonomy programs at Sikorsky. Sikorsky’s goal for its MATRIX automated helicopter is that the AI prove itself as reliable as flight controls for manned aircraft, failing only once in a billion flight hours. “It’s the same probability as the wing falling off,” Cherepinsky told me in an interview. By contrast, traditional autopilots are permitted much higher rates of failure, on the assumption a competent human pilot will take over if there’s a problem.
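To put the one-in-a-billion figure in perspective, here is a rough sketch in Python. It assumes failures arrive independently at a constant rate (a simple Poisson model, which is our assumption and not something Sikorsky described). The looser comparison rate and the fleet hours are hypothetical numbers chosen only for illustration.

```python
import math

def prob_at_least_one_failure(rate_per_hour: float, hours: float) -> float:
    """Chance of one or more failures, assuming a constant failure rate."""
    # -expm1(-x) evaluates 1 - exp(-x) accurately even when x is tiny.
    return -math.expm1(-rate_per_hour * hours)

TARGET_RATE = 1e-9        # one failure per billion flight hours (from the article)
HYPOTHETICAL_RATE = 1e-5  # illustrative looser rate, not a real autopilot spec
FLEET_HOURS = 1_000_000   # hypothetical total hours flown across a fleet

p_target = prob_at_least_one_failure(TARGET_RATE, FLEET_HOURS)
p_loose = prob_at_least_one_failure(HYPOTHETICAL_RATE, FLEET_HOURS)

print(f"Target rate:       {p_target:.4%} chance of any failure")
print(f"Hypothetical rate: {p_loose:.4%} chance of any failure")
```

Under these assumptions, a million flight hours at the target rate carries roughly a one-in-a-thousand chance of any failure at all, while the looser hypothetical rate makes at least one failure close to certain. That gap is why the looser standard depends on a pilot being ready to take over.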

Sikorsky’s experimental unmanned UH-60 Black Hawk

To reach that higher standard — and just as important, to be able to prove they’d reached it — the Sikorsky team ruled out the latest AI techniques, just as IARPA had done, in favor of more old-fashioned “deterministic” programming. While deep learning AI can surprise its human users with flashes of brilliance — or stupidity — deterministic software always produces the same output from a given input.
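A toy illustration of that property, in Python: the controller names, the gain, and the noise level below are all hypothetical, and the noisy function is only a stand-in for unpredictability, not a model of how deep learning actually behaves.

```python
import random

GAIN = 0.5  # hypothetical gain, chosen only for illustration

def deterministic_controller(altitude_error_m: float) -> float:
    """Fixed rule: the same input always yields the same command."""
    return GAIN * altitude_error_m

def unpredictable_controller(altitude_error_m: float, rng: random.Random) -> float:
    """Stand-in for a system whose output can differ from call to call."""
    return GAIN * altitude_error_m + rng.gauss(0.0, 0.1)

# Call each controller 1,000 times with the same input and count
# how many distinct outputs come back.
fixed_outputs = {deterministic_controller(10.0) for _ in range(1000)}

rng = random.Random(0)  # seeded so the demo itself is repeatable
varied_outputs = {unpredictable_controller(10.0, rng) for _ in range(1000)}

print(len(fixed_outputs))   # prints 1
print(len(varied_outputs))  # many distinct values
```

Because the deterministic rule returns one answer per input, a tester can check that answer once and rely on it afterward. With the second function, a single passing test says little about the next call.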

“Machine learning cannot be verified and certified,” Cherepinsky said. “Some algorithms (in use elsewhere) we chose not to use… even though they work on the surface, they’re not certifiable, verifiable, and testable.”

Sikorsky has used some deep learning algorithms in its flying laboratory, Cherepinsky said, and he’s far from giving up on the technology, but he doesn’t think it’s ready for real world use: “The current state of the art (is) they’re not explainable yet.”

Robots With A Human Face

Explainable, tested, transparent algorithms are necessary but hardly sufficient for making an artificial intelligence that people will trust. They help address our rational concerns about AI, but if humans were purely rational, we might not need AI in the first place. It’s one thing to build AI that’s trustworthy in general and in the abstract, quite another to get actual individual humans to trust it. The AI needs to communicate effectively with humans, which means it needs to communicate the way humans do, and even think the way a human does.

“You see in artificial intelligence an increasing trend towards lifelike agents and a demand for those agents, like Siri, Cortana, and Alexa, to be more emotionally responsive, to be more nuanced in ways that are human-like,” David Hanson, CEO of Hong Kong-based Hanson Robotics, told the Johns Hopkins conference. When we deal with AI and robots, he said, “intuitively, we think of them as life forms.”

David Hanson with his Einstein robot.

Hanson makes AI toys like a talking Einstein doll and expressive talking heads like Han and Sophia, but he’s looking far beyond such gadgets to the future of ever-more powerful AI. “How can we, if we make them intelligent, make them caring and safe?” he asked. “We need a global initiative to create benevolent super intelligence.”

There’s a danger here, however. It’s called anthropomorphization, and we do it all the time. People chronically attribute human-like thoughts and emotions to our cats, dogs, and other animals, ignoring how they are really very different from us. But at least cats and dogs — and birds, and fish, and scorpions, and worms — are, like us, animals. They think with neurons and neurotransmitters, they breathe air and eat food and drink water, they mate and breed, are born and die. An artificial intelligence has none of these things in common with us, and programming it to imitate humanity doesn’t make it human. The old phrase “putting lipstick on a pig” understates the problem, because a pig is biochemically pretty similar to us. Think instead of putting lipstick on a squid — except a squid is a close cousin to humanity compared to an AI.

With these worries in mind, I sought out Hanson after his panel and asked him about humanizing AI. There are three reasons, he told me: Humanizing AI makes it more useful, because it can communicate better with its human users; it makes AI smarter, because the human mind is the only template of intelligence we have; and it makes AI safer, because we can teach our machines not only to act more human but to be more human. “These three things combined give us better hope of developing truly intelligent adaptive machines sooner and making sure that they’re safe when they do happen,” he said.

This squid’s thought process is less alien to you than an artificial intelligence would be.

Usefulness: On the most basic level, Hanson said, “using robots and intelligent virtual agents with a human-like form makes them appealing. It creates a lot of uses for communicating and for providing value.”

Intelligence: Consider convergent evolution in nature, Hanson told me. Bats, birds, and bugs all have wings, although they grow and work differently. Intelligence may evolve the same way, with AI starting in a very different place from humans but ending up awfully similar.

“We may converge on human level intelligence in machines by modeling the human organism,” Hanson said. “AI originally was an effort to match the capacities of the human mind in the broadest sense, (with) creativity, consciousness, and self-determination — and we found that that was really hard, (but still) there’s no better example of mind that we know of than the human mind.”

Safety: Beyond convergent evolution is co-evolution, where two species shape each other over time, as humans have bred wolves into dogs and irascible aurochs into placid cows. As people and AI interact, Hanson said, “people will naturally select for features that are desirable and can be understood by humans, which then puts a pressure on the machines to get smarter, more capable, more understanding, more trustworthy.”

Sorry, real robots won’t be this cute and friendly.

By contrast, Hanson warned, if we fear AI and keep it at arm’s length, it may develop unexpectedly deep in our networks, in some internet backbone or industrial control system where it has not co-evolved in constant contact with humanity. “Putting them out of sight, out of mind, means we’re developing aliens,” he said, “and if they do become truly alive, and intelligent, creative, conscious, adaptive, but they’re alien, they don’t care about us.”

“You may contain your machine so that it’s safe, but what about your neighbor’s machine? What about the neighbor nation’s? What about some hackers who are off the grid?” Hanson told me. “I would say it will happen, we don’t know when. My feeling is that if we can get there first with a machine that we can understand, that proves itself trustworthy, that forms a positive relationship with us, that would be better.”

Click to read the previous stories in the series:

Artificial Stupidity: When Artificial Intelligence + Human = Disaster

Artificial Stupidity: Fumbling The Handoff From AI To Human Control
