Special Ops Using Army’s Prototype 3D Maps On Missions: Gervais

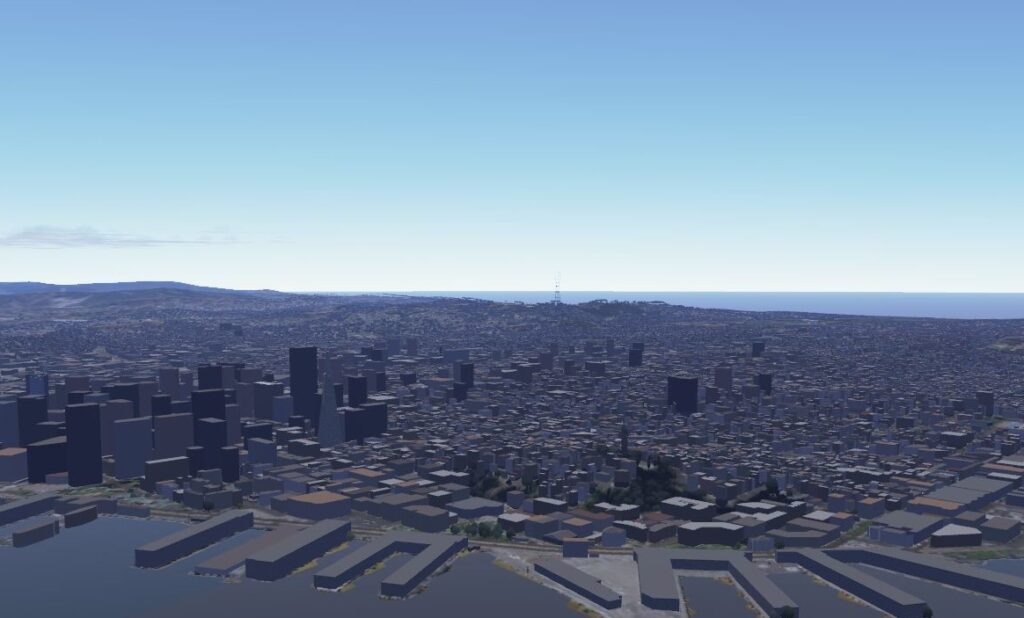

An early version of One World Terrain, the Army’s attempt at a 3D digital world map detailed enough for training simulations and mission planning.

WASHINGTON: The Army’s One World Terrain software was intended to build 3D battle maps for training simulations. But an early version has already proven so useful that special operators are using it to plan actual missions, the head of the Army’s Synthetic Training Environment task force told me.

“We have many SOF elements, Naval Special Warfare and also Army SOF, that are using it,” Maj. Gen. Maria Gervais told me. Some regular Army divisions and Marine Corps battalions are also using it for advance planning and map exercises before they deploy to wargames such as Pacific Pathways, she said, but “the priority goes to the operational team, to our deployed forces.”

Maj. Gen. Maria Gervais

The operators use a tablet and special software to designate an area of interest, dispatch a drone to scan it, and then – in a matter of hours – automatically compile the sensor readings into a 3D map so detailed you can even distinguish different species of trees.

The 3D software lets operators plan potential routes of advance to avoid detection or match munitions with targets to limit collateral damage, Gervais said. “They’re actually using an application that allows them to say, ‘okay, here I am, here’s the route I’m going to take. Let me drop the [estimated position of the] enemy in. Can the enemy see me? Can they not see me? What is the best route?’ They can do line-of-sight analysis, they can do route analysis. They can also do, for example, battle damage [assessment]. If I’m going to deploy this ordnance on, let’s just say a building, it can tell them where the minimal safe distance is.”
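To make the “Can the enemy see me?” question concrete, here is a minimal sketch of a line-of-sight check over a grid of terrain heights. It is an illustration only, not the Army’s software: the function name `has_line_of_sight`, the grid layout, and the default 1.8-meter eye height are all assumptions made for this example.

```python
from typing import List, Tuple

Grid = List[List[float]]  # terrain[row][col] = ground elevation in meters (assumed layout)


def has_line_of_sight(
    terrain: Grid,
    observer: Tuple[int, int],
    target: Tuple[int, int],
    observer_height: float = 1.8,  # assumed eye height above ground
    target_height: float = 1.8,    # assumed target height above ground
) -> bool:
    """Return True if no terrain cell between observer and target blocks the view."""
    (r0, c0), (r1, c1) = observer, target
    z0 = terrain[r0][c0] + observer_height
    z1 = terrain[r1][c1] + target_height

    # Sample one point per grid step along the straight line between the two cells.
    steps = max(abs(r1 - r0), abs(c1 - c0))
    for i in range(1, steps):
        t = i / steps
        r = round(r0 + t * (r1 - r0))
        c = round(c0 + t * (c1 - c0))
        sight_z = z0 + t * (z1 - z0)  # height of the sight line at this sample
        if terrain[r][c] > sight_z:
            return False  # terrain rises above the sight line
    return True


# A 10-meter ridge in the middle of otherwise flat ground hides the target.
ridge = [[0.0, 0.0, 10.0, 0.0, 0.0]]
flat = [[0.0, 0.0, 0.0, 0.0, 0.0]]
print(has_line_of_sight(ridge, (0, 0), (0, 4)))
print(has_line_of_sight(flat, (0, 0), (0, 4)))
```

A route planner could run a check like this for each point along a candidate route against each estimated enemy position, then prefer the route with the fewest exposed points. Real terrain tools also account for vegetation, buildings, and the curvature of the earth, which this sketch ignores.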

“The soldiers on the ground are finding a way to say, ‘this is value added,’” she told me. “So we’re continuously collecting the data and saying, ‘okay, what else could 3D terrain be used for?’”

The ultimate goal for One World Terrain, Gervais said, is to provide detailed 3D maps of anywhere the US military might need to train, deploy, or fight. It would be a single database for use by all future Army training systems, in contrast to the 57 inconsistently compatible terrain formats used today. In one particularly glitchy simulation, helicopter pilots thought they were hiding behind cover, but the terrain they were hiding behind didn’t show up on the screens of the other side’s anti-aircraft gunners, who shot the choppers down.

The foundational layer for One World Terrain will come from existing detailed and carefully verified databases compiled by organizations like the National Geospatial-Intelligence Agency and the Army Geospatial Center. To underline the collaboration, officials from both NGA and AGC will appear alongside Gervais on a panel Tuesday at the AUSA 2019 conference.

“We’re trying to capitalize on stuff that is already out there because we don’t want to reinvent it,” Gervais said. “That basic information kind of already exists, so we would bring it in and put our layers on top of it.” Those layers turn the 2D maps into 3D terrain complete with trees, buildings with mapped interiors, and underground areas like subway tunnels.

But if a location a unit needed to study wasn’t already mapped in adequate detail, or if the area had been altered by new construction, natural disaster, or battle damage, a satellite or drone could be tasked to take a closer look and update the database. For example, she said, “we’ve already seen technology that can actually go and scan the inside of a building very quickly and put it into our mission control platform.”

There’s no endpoint to this effort, Gervais said. It’s a continuous process of collecting and processing ever more data from intelligence, surveillance, and reconnaissance (ISR) assets around the world.

That is a staggering amount of data, Gervais warned. It will take years of work to develop artificial intelligence powerful enough to process it and a wireless network robust enough to distribute it to units at bases and forward outposts around the world.

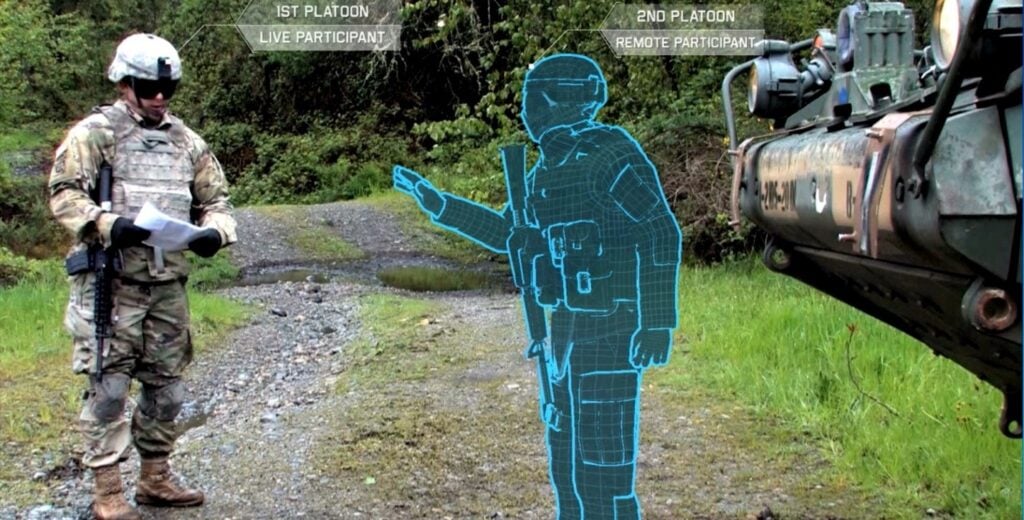

An Army soldier interacts with a virtual comrade in an “augmented reality” simulation.

Really Big Data

The task goes far beyond the Synthetic Training Environment team. “The future really is going to be about the ability to collect the data, process the data, use AI/machine learning as quickly as possible to help decision makers, and distribute it,” Gervais said. “There’s a Department [of Defense]-wide effort to take a look at data management. We’re part of that effort.”

Within the Army itself, Gervais’s Synthetic Training Environment Cross Functional Team has to work with four other CFTs developing new long-range artillery, armored vehicles, aircraft, missile defense systems, and soldier equipment. That’s because STE will provide training simulations for all of them, even running as an augmented-reality overlay on individual soldiers’ IVAS goggles.

She needs to work with the CFT developing Assured Precision Navigation & Timing alternatives to GPS – which can be hacked, jammed, or spoofed – to ensure everyone is located correctly on the digital maps at all times.

And, above all, she must collaborate closely with the CFT developing the new Army command, control, and communications network, over which her map data must flow. As one sign of that closeness, the Network CFT chief, Maj. Gen. Peter Gallagher, will also appear alongside Gervais at the STE panel during AUSA.

Army signal soldiers set up a portable antenna for their VSAT (Very Small Aperture Terminal) communications system.

Together, Gervais told me, “one of the most exciting things we just kicked off is Monday, we started what I’m calling our STE-Network Integration pilot.” That’s a test network to send trial loads of STE data between dispersed locations. “We’re actually taking a look at collecting the data, pushing it across this network, and then analyzing that to see how much did it take, how much latency was there,” she said, “so we can better understand what we need to do and what we’re capable of doing.”

It’s up to Gallagher and his team to make the network as robust as possible, able to transmit large quantities of data – from Gervais’s 3D maps to updates on units’ maintenance and ammo needs – even to frontline units whose communications are being jammed. It’s up to Gervais and her team to make her data as compact and efficient as possible so it’s easier for the network to transmit.

“We’re using advanced machine learning, artificial intelligence, and also other technologies to automate the processes for collection, storage, distribution, in order to minimize both that data footprint and the impact to our Army network,” Gervais said. “Working with our industry and academia partners, we’re looking at what are the ways that you can manage that data so as you collect it, for example, you’re only sending the data that you need.”

Gervais sees considerable potential in what’s called edge computing: Instead of sensors having to transmit video feeds and other raw data back to central command posts or data centers for processing, exploit Moore’s Law to put ever-more-compact processors on the sensors themselves so they can do much of that processing themselves and only transmit the slimmed-down end product.
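The edge-computing idea of “only sending the data that you need” can be sketched in a few lines. The example below is hypothetical and not drawn from any Army system: it assumes a sensor produces a sequence of frames (here, simple lists of numbers) and transmits a frame only when it differs enough from the last one it sent. The names `mean_abs_diff` and `select_frames_to_send`, and the threshold value, are invented for illustration.

```python
from typing import List, Optional

Frame = List[float]  # one sensor reading, e.g. a flattened elevation patch (assumed format)


def mean_abs_diff(a: Frame, b: Frame) -> float:
    """Average absolute difference between two frames of equal length."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def select_frames_to_send(frames: List[Frame], threshold: float) -> List[int]:
    """Return the indexes of frames worth transmitting.

    The first frame is always sent. Each later frame is sent only if it
    differs from the most recently sent frame by more than the threshold.
    """
    sent: List[int] = []
    last_sent: Optional[Frame] = None
    for index, frame in enumerate(frames):
        if last_sent is None or mean_abs_diff(frame, last_sent) > threshold:
            sent.append(index)
            last_sent = frame
    return sent


# Four readings: the second barely changes, the third changes a lot, the fourth repeats it.
readings = [[0.0, 0.0], [0.0, 0.1], [5.0, 5.0], [5.0, 5.0]]
print(select_frames_to_send(readings, threshold=1.0))  # [0, 2]
```

In this toy case the sensor sends two frames instead of four. The trade-off is the one Gervais describes: a higher threshold saves more bandwidth but risks dropping a change that a planner would have wanted to see.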

Another way to avoid being deluged by data is to limit your appetite for it, depending on the task. “We don’t want perfection to be our enemy,” Gervais told me. “What things do we absolutely have to get right, for what feature, and what reason?”

“If you’re in an aircraft, you don’t necessarily need to see high levels of fidelity” about every obstacle on the ground, she said. A ground vehicle simulator or route-planning software would need considerably higher fidelity, but it wouldn’t need to show you, say, the floor plans inside buildings. For infantry and special operators rehearsing actual combat missions, however, “that’s where the data needs to be as realistic as possible,” she said. “You don’t want to be practicing [in VR] and get there and find out the doorknob is on the opposite side of where you thought it was.”
